Data modelling is the art of abstracting the aspects of reality relevant to a given problem and then representing those aspects in a database schema, a collection of classes, or some other concrete form. Data modellers thus deal with several different worlds simultaneously:

- The problem domain, a part of the real world (or at least a part of the world considered “real” from the point of view of the system being designed);

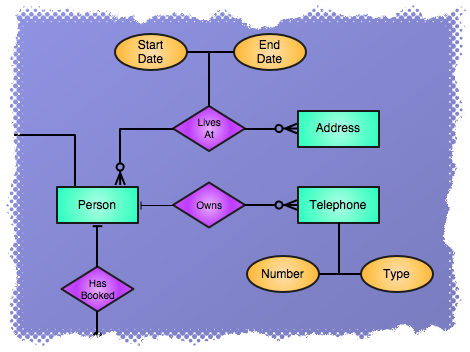

- The conceptual schema, an abstract model describing the problem domain in terms of entities and their properties and relationships;

- The logical schema, which in this article will mean a relational database schema.

(There are other layers that might be considered too, such as the physical schema, which describes how the logical schema is implemented in some specific database management system. This won’t concern us at all here.)
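To make the distinction between the conceptual and logical levels concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `Pupil` and `Contact` entities are borrowed loosely from the school example later in this article, and the table and column names are hypothetical. The dataclasses stand in for a conceptual schema (entities, properties, relationships), while the SQL is one of many possible logical schemata for the same model.

```python
import sqlite3
from dataclasses import dataclass, field
from typing import List

# Conceptual schema: entities, their properties and relationships, with no
# commitment to tables or keys. All names here are hypothetical.
@dataclass
class Contact:
    name: str
    address: str

@dataclass
class Pupil:
    name: str
    contacts: List[Contact] = field(default_factory=list)

# Logical schema: one possible relational rendering of the same model.
# The relationship "a pupil has contacts" becomes a link table.
LOGICAL_SCHEMA = """
CREATE TABLE pupil (
    pupil_id INTEGER PRIMARY KEY,
    name     TEXT NOT NULL
);
CREATE TABLE contact (
    contact_id INTEGER PRIMARY KEY,
    name       TEXT NOT NULL,
    address    TEXT NOT NULL
);
CREATE TABLE pupil_contact (
    pupil_id   INTEGER NOT NULL REFERENCES pupil(pupil_id),
    contact_id INTEGER NOT NULL REFERENCES contact(contact_id),
    PRIMARY KEY (pupil_id, contact_id)
);
"""

def store(conn, pupil):
    """Map one conceptual Pupil and its contacts onto the logical schema."""
    pupil_id = conn.execute(
        "INSERT INTO pupil (name) VALUES (?)", (pupil.name,)
    ).lastrowid
    for contact in pupil.contacts:
        contact_id = conn.execute(
            "INSERT INTO contact (name, address) VALUES (?, ?)",
            (contact.name, contact.address),
        ).lastrowid
        conn.execute(
            "INSERT INTO pupil_contact VALUES (?, ?)", (pupil_id, contact_id)
        )
    return pupil_id

conn = sqlite3.connect(":memory:")
conn.executescript(LOGICAL_SCHEMA)
store(conn, Pupil("Alex", [Contact("Sam", "1 High Street")]))
```

Another modeller might just as reasonably have stored the address in a table of its own, or attached contacts to households rather than to pupils. The conceptual model leaves those choices open.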

Analysing a problem domain and building conceptual and logical schemata to capture its essence is an art rather than a science. The outcome of any particular attempt will tend to depend on subtle - or not so subtle - differences in judgement about the relative likelihood of different ways in which the parts of the real world being analysed can vary, and the significance or otherwise of those variations to the operation of the system which will be built on top of the logical schema. People also vary in their skill at modelling and their practical and aesthetic preferences for one design option or another. This means that multiple attempts at modelling the same domain will almost certainly result in divergent conceptual schemata, and the subsequent logical schemata are likely to be wildly divergent.

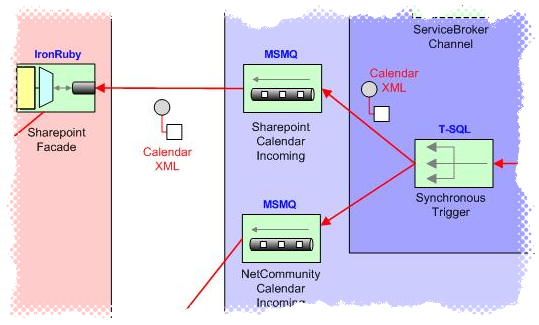

Real organisations tend to face problems in multiple domains which overlap to varying degrees. Typically they build or acquire separate systems for dealing with each domain - the cost of developing a system which encompasses the union of all the problem domains is almost always prohibitive - and then later face the meta-problem of enabling the flow of data - or control - between the separate systems. To pick an example from the organisation with which I’m most familiar, an independent school might have an academic database (which stores details of teachers, pupils, pupils’ contacts, timetables, assessment and attendance data and so forth), a network directory (which stores details about user permissions, home folders and so on), a personnel system, an alumni database and possibly one or more commercial databases. Making these database systems work together is the problem of enterprise integration.

It’s now commonly accepted that the most promising approach to large-scale integration problems is to connect the component applications with an asynchronous message-passing system. Events in one component system generate messages that are sent over the integration infrastructure to other systems and, on reception at each target system, trigger events there. For example, a change to a contact’s address in one system will trigger the sending of a notification message which will make its way to all the other systems that share the contact, and these systems will then update their own address records. It’s often possible to add the required messaging endpoints to each component system without access to the application’s source code, for example by directly adding triggers to relevant tables. The integration system will contain components that translate, route, aggregate, decompose and otherwise transform the messages in transit. Integration systems designed along these lines have a minimal effect on each component’s performance, and also provide the compelling benefits of loose coupling, resilience in the face of component shutdowns or crashes, and clarity of structure.
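The address-change example can be sketched in a few lines of Python using only the standard library. This is an illustration under stated assumptions, not a description of any particular integration product: the `contact`, `outbox` and `person` tables, the `ContactAddressChanged` message type and the two helper functions are all hypothetical, and an in-memory `queue.Queue` stands in for the real messaging infrastructure. The point is that the endpoint is a database trigger, so the source application's own code is untouched.

```python
import json
import queue
import sqlite3

# Source system: a hypothetical academic database with a contact table.
source = sqlite3.connect(":memory:")
source.executescript("""
CREATE TABLE contact (contact_id INTEGER PRIMARY KEY, address TEXT NOT NULL);
CREATE TABLE outbox (
    message_id  INTEGER PRIMARY KEY AUTOINCREMENT,
    contact_id  INTEGER NOT NULL,
    new_address TEXT NOT NULL
);
-- The messaging endpoint, added without touching application code.
CREATE TRIGGER contact_address_changed
AFTER UPDATE OF address ON contact
WHEN OLD.address <> NEW.address
BEGIN
    INSERT INTO outbox (contact_id, new_address)
    VALUES (NEW.contact_id, NEW.address);
END;
INSERT INTO contact VALUES (1, '1 High Street');
""")

# Target system: a hypothetical alumni database with its own layout.
target = sqlite3.connect(":memory:")
target.executescript("""
CREATE TABLE person (external_id INTEGER PRIMARY KEY, postal_address TEXT);
INSERT INTO person VALUES (1, '1 High Street');
""")

channel = queue.Queue()  # stands in for the integration infrastructure

def relay_outbox():
    """Move pending outbox rows onto the channel as JSON messages."""
    rows = source.execute(
        "SELECT message_id, contact_id, new_address FROM outbox ORDER BY message_id"
    ).fetchall()
    for message_id, contact_id, new_address in rows:
        channel.put(json.dumps({
            "type": "ContactAddressChanged",
            "contact_id": contact_id,
            "address": new_address,
        }))
        source.execute("DELETE FROM outbox WHERE message_id = ?", (message_id,))
    source.commit()

def consume():
    """Apply every queued message to the target system's own schema."""
    while not channel.empty():
        message = json.loads(channel.get())
        if message["type"] == "ContactAddressChanged":
            target.execute(
                "UPDATE person SET postal_address = ? WHERE external_id = ?",
                (message["address"], message["contact_id"]),
            )
    target.commit()

# The application updates its own table; it knows nothing about messaging.
source.execute("UPDATE contact SET address = '2 Low Road' WHERE contact_id = 1")
relay_outbox()
consume()
```

Here the relay and the consumer run one after the other in a single process. In a real deployment they would run independently of the source application, which is where the loose coupling and tolerance of component shutdowns come from: if the target is down, messages simply wait on the channel.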

However, even when the integration architecture has been designed, it’s often not clear exactly what information the actual messages should contain. As I’ll describe in the next part, the key to message design is thinking clearly about the conceptual and logical levels of abstraction for the whole system and its constituent parts.
